We will pass the content through Beautiful Soup. The wiki_page_text variable contains the content from the page. Step 1 - Make a GET request to the Wikipedia page and fetch all the content. The first step involves scraping an entire Wikipedia page and then identifying the table that we would like to store as CSV.įor this article, we will scrape all the Tropical Cyclones of January, 2020. Now using the above information, we can scrape our Wikipedia tables. Each table data/cell is defined with a tag.Each table header is defined with a tag.Each table row is defined within a tag.The entire table is defined within tag.The above HTML code will generate the following table. Let’s dig deeper into the componenets of a table tag in HTML. We are interested in scraping the table tag of an HTML. When a web page is loaded, the browser creates a Document Object Model of the page.Īn HTML page consists of different tags - head, body, div, img, table etc. The HTML DOM is an Object Model for HTML. In order to scrape the necessary content, it is imperative that you understand HTML DOM properly. Pros and Cons of each web scraping library

The pros and cons of each of these libraries are described below. One should shoose the library that is best suited for their requirement. They are Beautiful Soup, Selenium or Scrapy.Įach of these libraries has its pro and cons of its own.

When it comes to using Python for web scraping, there are 3 libraries that developers consider for their scraping pipeline. Real Estate Listings, Job listings, price tracking on ecommerce websites, stock market trends and many more - Web Scraping has become a go to tool for each of these objectives and much more. With web scraping, one can accumulate tons of relevant data from various sources with a lot of ease, therefore, skipping on the manual effort. Every organization depends on minute analysis of various data sources in order to grow their business. Parsing rows of data from Wikipedia tableĢ1st century is the age of Data.You are going to scrape a Wikipedia table in order to fetch all the information, filter it(if necessary) and store them in a CSV.

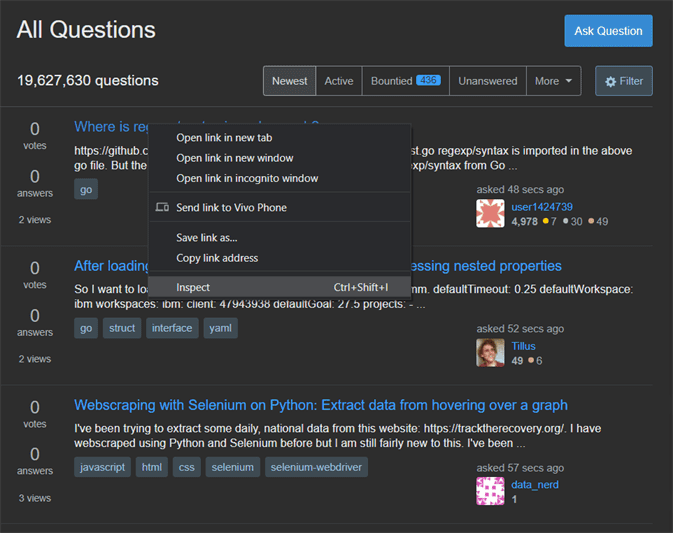

In this article you will learn to perform Web Scraping using the Beautiful Soup and Requests in Python 3.
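The flow described in this article (GET request, Beautiful Soup parsing, table rows, CSV) could be sketched roughly as follows. This is a minimal sketch, assuming the `requests` and `beautifulsoup4` packages are installed. The URL, the User-Agent string, the helper names (`fetch_page`, `extract_table_rows`, `rows_to_csv`), the table index, and the output filename are illustrative assumptions and do not come from the article; only `wiki_page_text` is the article's own name.

```python
import csv
import io

import requests
from bs4 import BeautifulSoup

# Assumption: the article does not give the exact page address.
WIKI_URL = "https://en.wikipedia.org/wiki/Tropical_cyclones_in_2020"


def fetch_page(url):
    """Step 1: make a GET request and return the page's HTML as text."""
    # Hypothetical User-Agent; some sites reject requests without one.
    headers = {"User-Agent": "wiki-table-example/0.1"}
    response = requests.get(url, headers=headers, timeout=10)
    response.raise_for_status()  # raise an exception on 4xx/5xx responses
    return response.text


def extract_table_rows(html, index=0):
    """Parse the HTML and return the rows of the table at position `index`."""
    soup = BeautifulSoup(html, "html.parser")
    table = soup.find_all("table")[index]
    rows = []
    for tr in table.find_all("tr"):
        # Header cells (<th>) and data cells (<td>) are both collected.
        cells = tr.find_all(["th", "td"])
        if cells:
            rows.append([cell.get_text(strip=True) for cell in cells])
    return rows


def rows_to_csv(rows):
    """Serialise a list of rows to CSV text with the standard csv module."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    wiki_page_text = fetch_page(WIKI_URL)
    # Assumption: which table holds the January data must be checked by hand.
    rows = extract_table_rows(wiki_page_text, index=0)
    with open("cyclones.csv", "w", newline="", encoding="utf-8") as f:
        f.write(rows_to_csv(rows))
```

Keeping the network call in its own function means the parsing helpers can be tried on any HTML string first, for example a small hand-written `<table>`, before pointing them at the live page. The right `index` depends on the page layout, so it is worth printing the first row of each table to find the one you want.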
